Enriching Streaming Data from Redpanda

Data enrichment adds information from a third-party database or an internal data source to existing data, making it more useful for a specific purpose. In this tutorial, you will learn how to write a Python dataflow for inline data enrichment using Redpanda, a streaming platform with a Kafka-compatible API (Kafka can be swapped in easily). We will show you how to consume a stream of IP addresses from a Redpanda topic, enrich them with third-party data to determine the location of each IP address, and produce the enriched data to a second Redpanda topic. This example uses the built-in Kafka input and Kafka output to do so.

Prerequisites

Kafka/Redpanda

Kafka and Redpanda can be used interchangeably, but we will use Redpanda in this demo for the ease of use it provides. We will use docker compose to start a Redpanda cluster.

Python modules

bytewax[kafka]==0.16.*
requests==2.28.0

Data

The data source for this example is under the data directory. It will be loaded into the Redpanda cluster via the utility script in the repository.

Your takeaway

Enriching streaming data inline is a common pattern. This tutorial will show you how you can do this with Python and provide you with code you can modify to build your own enrichment pipelines.

Step 1. Redpanda Overview

Redpanda is a streaming platform that exposes a Kafka-compatible API as the interface to the underlying system. It is a pub/sub-style system in which many producers can write to one topic and many consumers can subscribe to that topic and receive the same data. Like Kafka, Redpanda has the concept of partitioned topics: partitions can be read independently of one another, which increases the throughput of a topic.
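To make the idea of a partitioned topic concrete, here is a minimal in-memory sketch. It is not how Redpanda is implemented: the PartitionedTopic class is hypothetical, the IP addresses are documentation-range sample values, and crc32 is used only as a deterministic stand-in for the hash a real client would apply to the key.

```python
import zlib
from typing import List, Tuple

Record = Tuple[bytes, bytes]


class PartitionedTopic:
    """Toy topic: records with the same key always land in the same partition."""

    def __init__(self, num_partitions: int) -> None:
        self.partitions: List[List[Record]] = [[] for _ in range(num_partitions)]

    def produce(self, key: bytes, value: bytes) -> int:
        # crc32 is a stand-in hash chosen for this sketch only.
        partition = zlib.crc32(key) % len(self.partitions)
        self.partitions[partition].append((key, value))
        return partition

    def read(self, partition: int, offset: int = 0) -> List[Record]:
        # Each partition is an independent, ordered log that any consumer can read.
        return self.partitions[partition][offset:]


topic = PartitionedTopic(num_partitions=3)
for ip in [b"192.0.2.1", b"198.51.100.2", b"192.0.2.1"]:
    topic.produce(key=ip, value=ip)

# Two independent consumers reading the same partition receive the same records.
partition = zlib.crc32(b"192.0.2.1") % 3
consumer_a = topic.read(partition)
consumer_b = topic.read(partition)
```

Because each partition is a separate log, several readers can work through different partitions at the same time, which is where the throughput gain comes from.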

By leveraging the Kafka-compatible API of Redpanda, Bytewax can consume from a Redpanda cluster in a similar way to Kafka. The code we will write in the following sections will be agnostic to the underlying streaming platform, so the tutorial can be adapted to work with Kafka as well.

Step 2. Constructing the Dataflow

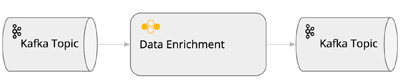

Every dataflow contains, at the very least, an input and an output. In this example, the input source is a Redpanda topic and the output sink is another Redpanda topic. Between the input and output lies the code used to transform the data, as illustrated by the diagram above.

Let's walk through constructing the input, the transformation code and the output.

Redpanda Input

Bytewax has a concept of built-in, configurable input sources. At a high level, these are sources that can be configured and will be used as the input for a dataflow. The KafkaInput is one of the more popular input sources. It is important to note that a connection will be made on each worker, which allows each worker to read from a disjoint set of partitions for a topic.
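The following sketch illustrates what "a disjoint set of partitions" per worker means. The assign_partitions function is hypothetical, and round-robin is an assumption made for illustration; the strategy Bytewax actually uses is not described here.

```python
from typing import Dict, List


def assign_partitions(num_partitions: int, num_workers: int) -> Dict[int, List[int]]:
    """Spread partitions over workers round-robin so no partition is read twice."""
    assignment: Dict[int, List[int]] = {worker: [] for worker in range(num_workers)}
    for partition in range(num_partitions):
        assignment[partition % num_workers].append(partition)
    return assignment


assignment = assign_partitions(num_partitions=5, num_workers=2)
print(assignment)  # {0: [0, 2, 4], 1: [1, 3]}
```

Every partition appears exactly once across all workers, so together the workers cover the whole topic without reading any record twice.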

To define our input in the dataflow, we use the input method from the Kafka operators (see the code snippet). This method takes several arguments: the step_id, the dataflow object, and the Kafka API arguments. The step_id is used for recovery purposes, and the dataflow object is needed in case we have multiple input sources. The Kafka API arguments are where we set up our dataflow to consume from Redpanda.

By configuring the input, we can ensure that our dataflow is consuming data from the correct Redpanda/Kafka topic and can handle any potential failures that may occur during the processing of the data.

The output of the input step is a stream of errors and ok messages.
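As a plain-Python model of that split, the sketch below separates consumed items into an ok stream and an error stream. SourceMessage, SourceError, and split_results are hypothetical stand-ins written for this example, not Bytewax types.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Tuple, Union


@dataclass
class SourceMessage:
    """Hypothetical stand-in for a record that was consumed successfully."""
    key: Optional[bytes]
    value: bytes


@dataclass
class SourceError:
    """Hypothetical stand-in for a record the consumer could not deliver."""
    reason: str


def split_results(
    results: Iterable[Union[SourceMessage, SourceError]],
) -> Tuple[List[SourceMessage], List[SourceError]]:
    """Route each item to the ok list or the error list."""
    oks: List[SourceMessage] = []
    errs: List[SourceError] = []
    for item in results:
        if isinstance(item, SourceError):
            errs.append(item)
        else:
            oks.append(item)
    return oks, errs


oks, errs = split_results([
    SourceMessage(b"k1", b"192.0.2.1"),
    SourceError("could not decode record"),
    SourceMessage(None, b"198.51.100.2"),
])
```

Keeping errors in their own stream lets the rest of the pipeline work only with valid messages, while errors can be logged or used to stop the dataflow.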

A Quick Aside on Recovery: Bytewax can be configured to checkpoint the state of a running Dataflow. When recovery is configured, Bytewax will store offset information so that when a dataflow is restarted, input will resume from the last completed checkpoint. This makes it easy to get started working with data in Kafka, as managing consumer groups and offsets is not necessary.
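The resume-from-checkpoint behaviour can be sketched with a toy consumer. This is a simplified model, not Bytewax's recovery mechanism: run_until, checkpoint_every, and crash_at are hypothetical names, and the checkpoint is just the next offset to read.

```python
from typing import List, Optional, Tuple


def run_until(
    records: List[str],
    start_offset: int,
    checkpoint_every: int,
    crash_at: Optional[int] = None,
) -> Tuple[List[str], int]:
    """Process records from start_offset; return (processed, last checkpoint)."""
    processed: List[str] = []
    checkpoint = start_offset
    for offset in range(start_offset, len(records)):
        if crash_at is not None and offset == crash_at:
            break  # simulate the process dying before this record is handled
        processed.append(records[offset])
        if (offset + 1) % checkpoint_every == 0:
            checkpoint = offset + 1  # next offset to read after a restart
    return processed, checkpoint


records = ["a", "b", "c", "d", "e"]
first_run, checkpoint = run_until(records, 0, checkpoint_every=2, crash_at=3)
second_run, _ = run_until(records, checkpoint, checkpoint_every=2)
print(first_run, checkpoint)  # ['a', 'b', 'c'] 2
print(second_run)             # ['c', 'd', 'e']
```

In this model, the record read after the last checkpoint ("c") is read again after the restart, which is why resuming from a stored offset is enough to avoid losing input.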

Data Transformation

Operators are methods that define how data flows through the dataflow: whether it is filtered, modified, aggregated, or accumulated. In this example we modify our data in flight, so we use the map operator. The map operator takes a Python function as an argument, and that function is called for every input item.

By leveraging the map operator, we can efficiently transform and enrich our streaming data inline using our favorite Python libraries.
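A minimal sketch of such an enrichment function follows, using Python's built-in map as a stand-in for the map operator. The record shape (a key and value pair of bytes), the make_enricher helper, and the in-memory FAKE_GEO table are assumptions for illustration; in a real pipeline the lookup would call a geolocation service of your choice.

```python
import json
from typing import Callable, Dict, Optional, Tuple

Record = Tuple[Optional[bytes], bytes]
Lookup = Callable[[str], Dict[str, str]]


def make_enricher(lookup: Lookup) -> Callable[[Record], Record]:
    """Build a function that adds location data to one (key, value) record."""

    def enrich(record: Record) -> Record:
        key, value = record
        ip_address = value.decode("utf-8").strip()
        location = lookup(ip_address)
        enriched = {"ip": ip_address, **location}
        return key, json.dumps(enriched).encode("utf-8")

    return enrich


# Hypothetical lookup table standing in for a third-party geolocation service.
FAKE_GEO = {"203.0.113.7": {"city": "Exampleville", "country": "Nowhere"}}


def fake_lookup(ip_address: str) -> Dict[str, str]:
    return FAKE_GEO.get(ip_address, {"city": "unknown", "country": "unknown"})


enrich = make_enricher(fake_lookup)
# The function is called once per item, as the map operator would call it.
enriched_records = list(map(enrich, [(b"k1", b"203.0.113.7"), (b"k2", b"192.0.2.1")]))
```

Passing the lookup in as an argument keeps the enrichment step easy to test without network access.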

Redpanda Output

To capture the data that is transformed in a dataflow, we use the output method from the Kafka operators. Like the input method, it takes configuration parameters as arguments. As with the input, Bytewax has a built-in output configuration for Kafka, KafkaOutput. We will configure our dataflow to write the enriched data to a second topic: ip_address_by_location.

Now we can capture the results of our pipeline!
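Putting the three pieces together, the wiring might look roughly like the sketch below. It assumes a Bytewax release that provides the bytewax.connectors.kafka.operators module with input and output functions and a KafkaSinkMessage type; this may differ from the release pinned in the prerequisites, so check the API documentation for your version. The dataflow name, the step ids, and the brokers, input_topic, and lookup parameters are hypothetical.

```python
import json
from typing import Any, Callable, Dict, List

OUTPUT_TOPIC = "ip_address_by_location"  # topic name taken from the text above


def encode_payload(ip_address: str, location: Dict[str, Any]) -> bytes:
    """Serialize one enriched record as UTF-8 JSON for the output topic."""
    return json.dumps({"ip": ip_address, **location}, sort_keys=True).encode("utf-8")


def build_flow(
    brokers: List[str],
    input_topic: str,
    lookup: Callable[[str], Dict[str, Any]],
):
    """Wire input -> map -> output. Requires Bytewax to be installed."""
    # Imported lazily so encode_payload can be used without Bytewax installed.
    # ASSUMPTION: these modules and names exist in the installed Bytewax release.
    from bytewax import operators as op
    from bytewax.connectors.kafka import KafkaSinkMessage
    from bytewax.connectors.kafka import operators as kop
    from bytewax.dataflow import Dataflow

    flow = Dataflow("redpanda-enrichment")
    source = kop.input("redpanda-in", flow, brokers=brokers, topics=[input_topic])

    def enrich(msg):
        # msg is assumed to expose the consumed record's key and value as bytes.
        ip_address = msg.value.decode("utf-8")
        payload = encode_payload(ip_address, lookup(ip_address))
        return KafkaSinkMessage(key=msg.key, value=payload)

    enriched = op.map("enrich", source.oks, enrich)
    kop.output("redpanda-out", enriched, brokers=brokers, topic=OUTPUT_TOPIC)
    return flow
```

To run this with python -m bytewax.run, the module would need a module-level variable such as flow = build_flow(...), with the broker address and input topic matching your Redpanda setup.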

Kicking off execution

With the dataflow code written, the final step is to determine how the pipeline will be executed. Bytewax provides methods in the execution module that can be used to define the execution method, whether as a single-threaded process on a local machine, or as a scalable process across a Kubernetes cluster. You can find detailed information on how to use these methods in the API documentation.

Bytewax offers two types of workers: worker threads and worker processes. In most cases, it is recommended to use worker processes, as this approach can maximize the efficiency of data processing.

With Bytewax you can easily optimize your workflow for your data streaming needs, whether working on a small project or processing large volumes of data.

Step 3. Deploying the Dataflow

Deploying your dataflow can be done in several ways, depending on your needs. For local testing, you can run a dataflow as a regular Python module with python -m bytewax.run dataflow:flow. For production, the recommended way is to use the waxctl command line tool to run the workloads on your cloud infrastructure or on the Bytewax platform.

To run this tutorial, you can clone the repository to your machine and run the commands in the run.sh script, which will start a container running Redpanda and load it with sample data. From there, it will run the dataflow from the tutorial and enrich the data.

Deploying to AWS

If you're looking to deploy your Bytewax dataflow to a public cloud like AWS, you can do so easily with the waxctl command line tool and minimal configuration.

To get started, you'll need to have the AWS CLI installed and configured. Additionally, you'll need to ensure that your streaming platform (whether it's Redpanda or Kafka) is accessible from the instance.

waxctl aws deploy kafka-enrichment.py --name kafka-enrichment \
  --requirements-file-name requirements-ke.txt

Here, waxctl will configure and start an AWS EC2 instance and run your dataflow on the instance.

To see the default parameters, you can run the help command and see them in the command line (see the output snippet).

Summary

In this tutorial, we covered how to construct a dataflow to support inline data enrichment using Python and Bytewax.

We walked through the process of constructing the input, transformation, and output components of the dataflow.

We showed how easy it is to deploy the dataflow, whether for local testing purposes or on a public cloud like AWS, using the waxctl command line tool.

We want to hear from you!

If you have any trouble with the process or have ideas about how to improve this document, come talk to us in the #troubleshooting Slack channel!
